NLPGuard: A Framework for Mitigating the Use of Protected Attributes by NLP Classifiers

Nov 1, 2024·,,,,·

1 min read

Salvatore Greco

Ke Zhou

Licia Capra

Tania Cerquitelli

Daniele Quercia

Image credit: Unsplash

Image credit: UnsplashAbstract

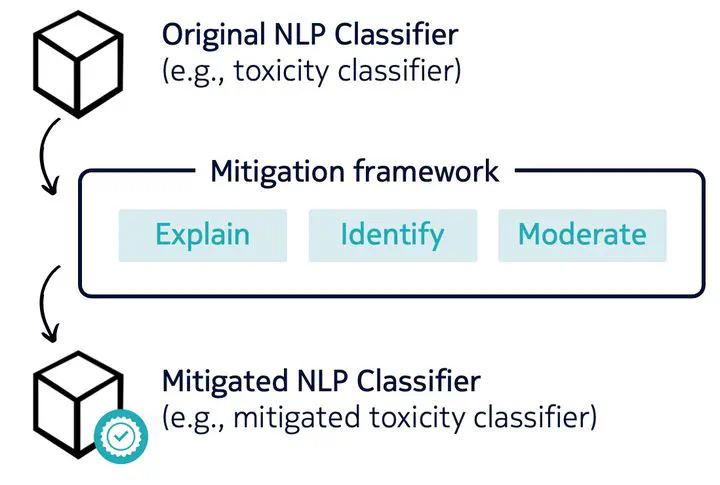

AI regulations are expected to prohibit machine learning models from using sensitive attributes during training. However, the latest Natural Language Processing (NLP) classifiers, which rely on deep learning, operate as black-box systems, complicating the detection and remediation of such misuse. Traditional bias mitigation methods in NLP aim for comparable performance across different groups based on attributes like gender or race but fail to address the underlying issue of reliance on protected attributes. To partly fix that, we introduce NLPGuard, a framework for mitigating the reliance on protected attributes in NLP classifiers. NLPGuard takes an unlabeled dataset, an existing NLP classifier, and its training data as input, producing a modified training dataset that significantly reduces dependence on protected attributes without compromising accuracy. NLPGuard is applied to three classification tasks:\ identifying toxic language, sentiment analysis, and occupation classification. Our evaluation shows that current NLP classifiers heavily depend on protected attributes, with up to 23% of the most predictive words associated with these attributes. However, NLPGuard effectively reduces this reliance by up to 79%, while slightly improving accuracy.

Type

Publication

Proceedings of the ACM on Human-Computer Interaction, Volume 8, Issue CSCW2

Click the Cite button above to demo the feature to enable visitors to import publication metadata into their reference management software.

Create your slides in Markdown - click the Slides button to check out the example.

Add the publication’s full text or supplementary notes here. You can use rich formatting such as including code, math, and images.